How I Elevate AI Quality: Implementing Multi-Model Reflection with CLI-Driven Workflows

TL;DR: Large Language Models (LLMs) have “blind spots.” Multi-model reflection, orchestrated via flexible CLI tools, significantly enhances AI output quality by leveraging diverse AI perspectives for critique and refinement. This article offers a practical guide to implementing this advanced technique effectively.

The Challenge: LLM Blind Spots and the Need for Reflection

While Large Language Models (LLMs) offer immense potential, they have inherent “blind spots”: areas where they struggle to identify their own errors, inconsistencies, or suboptimal reasoning. This limitation can lead to factual inaccuracies (hallucinations) or subtle bugs in generated code, which undermines AI output quality in critical applications. To mitigate this, the concept of reflection has emerged, where a model reviews its own output. However, a critical insight I’ve gained is that relying on a single AI model for self-correction often yields limited improvements, because the reviewer shares the blind spots of the generator.

The Solution: The Power of Multi-Model Reflection

To raise AI output quality beyond what self-review achieves, I rely on a multi-model reflection strategy. This approach leverages the diverse strengths of specialized AI models, enabling independent reviews and validations. By obtaining a “second opinion” from an external model, we can identify issues the initial model overlooked, leading to higher-quality results.

Why Multi-Model is Superior:

Diverse Perspectives: Different models, built on different architectures and trained on different datasets, offer unique viewpoints and interpret information distinctly.

Overcoming Blind Spots: An external model is less likely to share the exact blind spots or biases of the initial generator.

Specialized Capabilities: Reviewer models can be selected for particular strengths, such as advanced web search for fact-checking, or deep code analysis for bug detection.

Enhanced Robustness: Cross-validating outputs across models catches errors that a single pass would miss, which makes the final result more robust and accurate.

Taken together, these properties let multi-model reflection compensate for the limitations of any single AI.
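The idea of choosing a reviewer for its particular strength can be sketched as a small routing table. This is only an illustration: the reviewer functions below are hypothetical placeholders that return canned strings, where a real setup would call a search-capable model or a code-analysis model.

```python
from typing import Callable, Dict

# A reviewer is any callable that takes text and returns a critique.
Reviewer = Callable[[str], str]


def fact_check_reviewer(text: str) -> str:
    # Placeholder: a real reviewer would call a search-capable model here.
    return f"[fact-check] review of: {text}"


def code_reviewer(text: str) -> str:
    # Placeholder: a real reviewer would call a code-analysis model here.
    return f"[code-review] review of: {text}"


# Hypothetical mapping from task type to the reviewer best suited to it.
REVIEWERS: Dict[str, Reviewer] = {
    "fact_check": fact_check_reviewer,
    "code": code_reviewer,
}


def pick_reviewer(task_type: str, default: str = "fact_check") -> Reviewer:
    """Return the reviewer registered for task_type, or the default one."""
    return REVIEWERS.get(task_type, REVIEWERS[default])


print(pick_reviewer("code")("def add(a, b): return a - b"))
```

Keeping the mapping explicit makes it easy to swap in a different reviewer per task without touching the rest of the workflow.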

CLI-Driven Workflows: Making Advanced Reflection Practical

Implementing multi-model reflection demands a flexible and scriptable interface, and this is where Command-Line Interfaces (CLIs) shine. For those new to CLIs, these text-based interfaces let you interact directly with your computer and its tools, and they lend themselves well to automation. The Gemini CLI, in particular, is an open-source AI agent designed to bring the power of large language models directly to your terminal. It supports natural language interaction and integrates with local files and custom tools, making it a good platform for advanced workflows like multi-model reflection. You can find installation instructions and further documentation for the Gemini CLI on its official GitHub repository: https://github.com/google-gemini/gemini-cli

CLIs offer several key advantages that make them invaluable for practical AI reflection:

Natural Language Control: AI-powered CLIs let you express complex intentions in natural language instead of memorizing intricate syntax. This lowers the barrier to entry for orchestrating sophisticated AI workflows.

Local File Access: Because the CLI works directly with the local filesystem, files can be fed to it without first uploading them to a cloud service, which removes a manual step from every review.

Customization and Tool Integration: CLIs allow for progressively building and refining custom guidelines, integrating specialized external AI models as tools. For instance, my cli_tools repository provides examples of such integrations, making the multi-model reflection process highly customizable and efficient for specific review tasks (e.g., fact-checking, style-guide adherence).
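A minimal sketch of the tool-integration idea, assuming the external model is reachable as a command that reads a prompt on stdin and writes its answer to stdout. The function name `run_cli_model` and the stand-in command are hypothetical; whether a particular AI CLI accepts prompts this way is an assumption to check against its own documentation.

```python
import subprocess
import sys
from typing import List


def run_cli_model(command: List[str], prompt: str, timeout: float = 120.0) -> str:
    """Send `prompt` to `command` on stdin and return its stdout as text."""
    completed = subprocess.run(
        command,
        input=prompt,
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return completed.stdout.strip()


# Stand-in "model" so the sketch runs anywhere: a Python one-liner that
# echoes its input in upper case. Replace it with a real model command.
STAND_IN = [sys.executable, "-c", "import sys; print(sys.stdin.read().upper())"]

print(run_cli_model(STAND_IN, "review this draft"))  # prints: REVIEW THIS DRAFT
```

Wrapping each model behind the same "prompt in, text out" function is what makes it straightforward to plug different reviewers into one workflow.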

How It Works: A Concrete Multi-Model Reflection Workflow

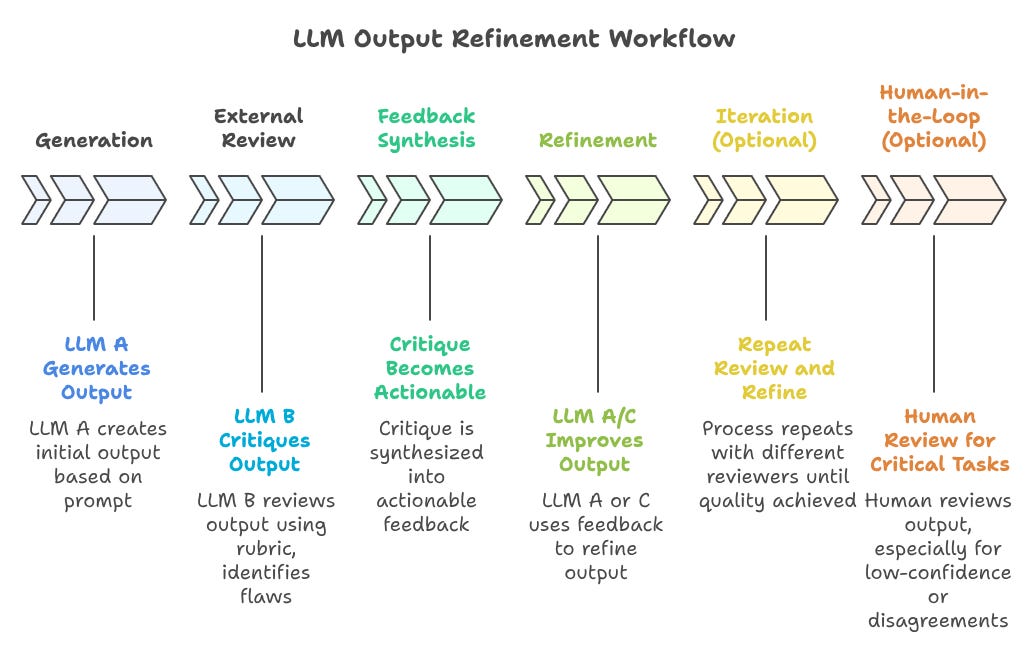

The core multi-model reflection workflow is an iterative loop of generation, external review, and refinement.

Conceptual Workflow:

Initial Generation: An LLM generates an output based on a given prompt.

External Review: A different, specialized LLM (the reviewer) critiques the output.

Feedback Synthesis: The critique is synthesized into actionable feedback.

Refinement: The original or another LLM refines the output using this feedback.

Iteration (Optional): This process can be repeated for further quality improvement.

Human-in-the-Loop (Optional but Recommended): A human review step can be integrated for critical tasks.
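The steps above can be sketched as a short loop. This is an illustration under stated assumptions, not the exact mechanics of any particular CLI: a "model" is modeled as any callable from prompt to text, the `APPROVED` marker is a hypothetical convention for the reviewer to signal that no changes are needed, and the human-in-the-loop step is left out.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# A "model" is any callable mapping a prompt string to a response string.
# It could wrap an API client or a CLI call; both are assumptions here.
Model = Callable[[str], str]


@dataclass
class ReflectionResult:
    output: str
    critiques: List[str] = field(default_factory=list)


def reflect(prompt: str, generator: Model, reviewer: Model,
            max_rounds: int = 2, approve_marker: str = "APPROVED") -> ReflectionResult:
    """Generate, obtain an external critique, and refine for up to max_rounds."""
    output = generator(prompt)  # initial generation
    critiques: List[str] = []
    for _ in range(max_rounds):
        # External review by a different model.
        critique = reviewer(
            f"Review the answer below for errors. Reply {approve_marker} if none.\n\n"
            f"Task: {prompt}\n\nAnswer: {output}"
        )
        critiques.append(critique)
        if approve_marker in critique:
            break  # the reviewer found nothing to fix
        # Refinement: feed the critique back to the generator.
        output = generator(
            f"Task: {prompt}\n\nPrevious answer: {output}\n\n"
            f"Reviewer feedback: {critique}\n\nRewrite the answer to address the feedback."
        )
    return ReflectionResult(output=output, critiques=critiques)
```

Capping the loop with `max_rounds` keeps cost bounded when the two models never fully agree, and the returned critiques give a human reviewer a trail to inspect on critical tasks.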

Visualizing the Workflow: the diagram at the start of this section shows this loop.

Case Study: Multi-Model Reflection in Action with the Gemini CLI

To illustrate the power of multi-model reflection, I’ve prepared a concise video demonstration of my workflow using the Gemini CLI. This example showcases how I orchestrate different AI models to achieve a more comprehensive and reliable understanding of current events.

My process begins with a direct query to the Gemini CLI for the latest AI news. The CLI, acting as my intelligent assistant, first leverages its integrated GoogleSearch capabilities to provide an initial, broad overview of recent developments. This rapid first pass gives me a foundational understanding.

I then introduce a specialized external tool: grok_tool.md. This tool, part of my broader cli_tools repository and integrated seamlessly into my CLI environment, allows me to tap into a different AI model known for its distinct strengths, such as real-time information access and unique perspectives.

The power of multi-model reflection unfolds as the Gemini CLI receives and processes the fact-check report from the specialized Grok AI tool. This demonstrates a process for potential iterative refinement, orchestrated entirely through the CLI.
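To make the "process the fact-check report" step more concrete, here is a rough sketch of turning a report into a refinement prompt. The report format is invented for illustration (one `VERDICT | claim | note` line per finding); the actual output of the Grok tool is not specified here, so `Finding`, `parse_report`, and `build_refinement_prompt` are all hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Finding:
    verdict: str
    claim: str
    note: str


def parse_report(report: str) -> List[Finding]:
    """Parse lines of the assumed form 'VERDICT | claim | note'."""
    findings: List[Finding] = []
    for line in report.splitlines():
        parts = [p.strip() for p in line.split("|")]
        if len(parts) != 3:
            continue  # skip blank lines and free-text commentary
        verdict, claim, note = parts
        findings.append(Finding(verdict.upper(), claim, note))
    return findings


def build_refinement_prompt(draft: str, findings: List[Finding]) -> str:
    """Return a revision prompt, or an empty string if nothing needs fixing."""
    problems = [f for f in findings if f.verdict != "SUPPORTED"]
    if not problems:
        return ""
    bullets = "\n".join(f"- {f.claim}: {f.note} ({f.verdict})" for f in problems)
    return f"Revise the draft below. Fix these issues:\n{bullets}\n\nDraft:\n{draft}"
```

Passing only the unsupported claims back to the generator keeps the refinement prompt short and focused on what the reviewer actually flagged.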

Conclusion: Elevating AI with CLI-Driven Multi-Model Reflection

Reliable, high-quality AI output comes not from a single, monolithic model but from the intelligent orchestration of diverse AI perspectives. As demonstrated, multi-model reflection, made practical and accessible through CLI-driven workflows like those I employ with the Gemini CLI, is a meaningful step forward. By systematically using different AI models for critique and refinement, we can catch errors that a single model’s blind spots would hide, improve robustness, and gain finer control over AI-generated content. This approach turns a complex orchestration task into a few natural-language interactions, helping developers and engineers build more trustworthy AI solutions.

I encourage you to explore the possibilities of multi-model reflection in your own workflows. Consider experimenting with the Gemini CLI and integrating specialized tools, perhaps starting with the examples in my cli_tools repository, to experience firsthand how this strategy can elevate your AI quality.
